We are proud to announce that Scailable has been acquired by Network Optix.

See the Network Optix website for the announcement.

Scailable AI Manager on AI-BLOX

To ensure that our AI Manager provides the fastest and safest modular AI pipeline on any supported device, we don’t take supporting devices lightly: we want to fully support the whole platform, and we need to be convinced that the selected hardware is well suited to edge AI use cases. Today we are happy to announce that we have added another OEM to our supported hardware list: AI-BLOX.

What’s AI-BLOX?

Since edge AI requires specific hardware, the rollout of edge AI applications is often challenging. AI-BLOX has therefore developed a unique approach: modular hardware blox that accelerate your edge AI application rollout. We have experimented extensively with their devices: they are sturdy, easy to set up, and enable extremely rapid iteration on edge AI solutions from a hardware perspective. The devices also feature colorful modules that are easily customizable to support any use case.

Why does Scailable support AI-BLOX?

As you might have noticed, we are selective in the hardware we support. We need to ensure a highly efficient AI/ML pipeline, from I/O to model execution, on the full platform. Hence, the Scailable AI Manager must interface properly with all the hardware components on the device (accelerators, etc.), and we need to be confident that the device is suited to specific edge AI solutions.

With their modular design, AI-BLOX ticks all of these boxes. Thus, after extensive testing, we decided to add them to our supported devices. The modular take on hardware that AI-BLOX brings to the table aligns closely with our modular approach to edge AI software. AI-BLOX separates accelerators and physical I/O from the surrounding platform, making it easy to iterate quickly on edge AI solutions. The Scailable AI Manager separates the AI inference pipeline both from the underlying hardware (no need to compile your models specifically for your target) and from the AI model itself. This clear separation of the model development and (embedded) pipeline development cycles allows for extremely fast iteration on AI models at the edge.
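To make the idea of separating the pipeline from the model a bit more concrete, here is a minimal sketch in Python. It is not the Scailable AI Manager API: the names `Pipeline`, `ThresholdModel`, and `count_detections` are hypothetical and exist only for illustration. The sketch assumes a pipeline that owns input capture and post-processing, and a model that can be swapped without touching either.

```python
from dataclasses import dataclass
from typing import Callable, List, Protocol, Sequence


class Model(Protocol):
    """Hypothetical model interface: maps input values to output values."""

    def predict(self, inputs: Sequence[float]) -> List[float]: ...


@dataclass
class Pipeline:
    """Hypothetical pipeline: I/O and post-processing stay fixed, the model is swappable."""

    read_input: Callable[[], Sequence[float]]
    model: Model
    postprocess: Callable[[List[float]], str]

    def run_once(self) -> str:
        # Capture input, run inference, then post-process the result.
        return self.postprocess(self.model.predict(self.read_input()))


class ThresholdModel:
    """Toy stand-in for a real AI model: flags every value above a threshold."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold

    def predict(self, inputs: Sequence[float]) -> List[float]:
        return [1.0 if x > self.threshold else 0.0 for x in inputs]


def count_detections(outputs: List[float]) -> str:
    # Post-processing step: summarize the model output as a count.
    return f"detections: {int(sum(outputs))}"


# Fixed "sensor" readings stand in for real device I/O (an assumption).
pipeline = Pipeline(
    read_input=lambda: [0.2, 0.7, 0.9],
    model=ThresholdModel(0.5),
    postprocess=count_detections,
)
first = pipeline.run_once()

# Swap in a new model version; input capture and post-processing are untouched.
pipeline.model = ThresholdModel(0.8)
second = pipeline.run_once()
```

In this sketch, updating the model is a single assignment, which mirrors the point above: model development and pipeline development can proceed on separate cycles.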

Where can I learn more?

If you are interested in running your Scailable supported edge AI application on AI-BLOX hardware, it is easy to get started:

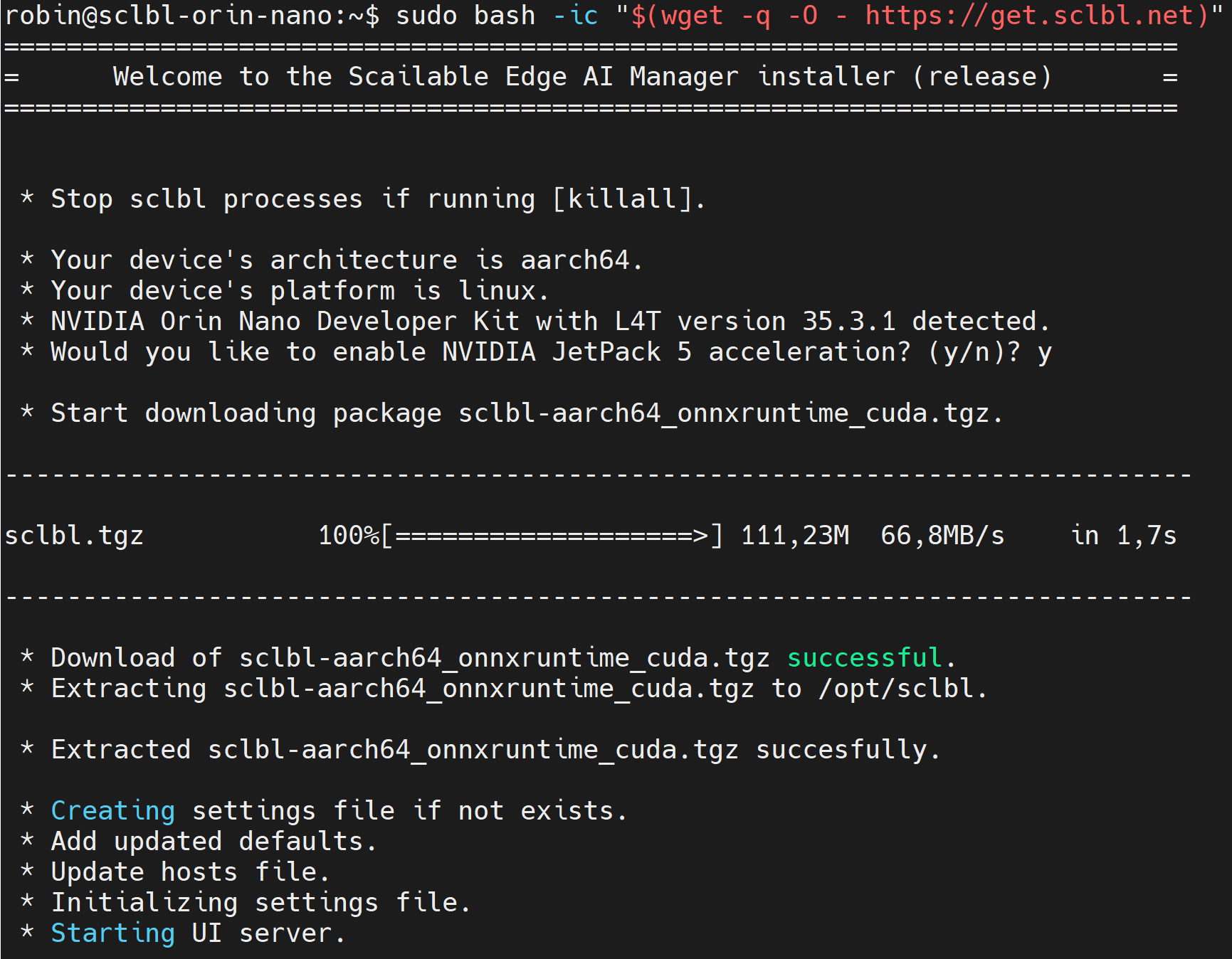

- Visit our AI-BLOX documentation to learn how to install and set up the Scailable AI Manager on AI-BLOX devices.

- Reach out to our sales team, or contact our support directly via our chat, to get your hands on an AI-BLOX device.

- Visit the AI-BLOX website.
